
December 28, 2021

Topics: Cloud Volumes ONTAP, Storage Efficiencies, AWS, Advanced. 8 minute read

As one of the most popular storage solutions in the cloud, Amazon S3 is used by enterprise organizations around the world to store their valuable data.

Managing this data according to its lifecycle is key to keeping storage cost-efficient. An Amazon S3 Lifecycle configuration is a simple set of rules that manages the lifecycle of data stored in S3 automatically.

This article looks at Amazon S3 versioning and Amazon S3 Lifecycle configurations, then shows how to use a lifecycle configuration to expire and delete objects in order to save on cloud storage costs.

Use the links below to jump down to the sections on:

- What Is Amazon S3 Object Versioning?

- Amazon S3 Lifecycle Configuration

- Leveraging S3 Lifecycle Management Configurations to Delete S3 Objects

- How to Set Up an S3 Lifecycle Policy to Delete Objects

- Do Even More with AWS S3 Object Storage

What Is Amazon S3 Object Versioning?

To understand the full range of applications for Amazon S3 Lifecycle configurations, it helps to first understand S3 bucket versioning.

The Amazon S3 versioning feature allows users to keep multiple versions of the same object in an S3 bucket for rollback or recovery purposes.

When versioning is enabled on an Amazon S3 bucket, S3 assigns a unique version ID to each version of an object. When an object is overwritten, the new upload becomes the current version with its own version ID, while the previous version is kept, with its original version ID, as a noncurrent version.

Users can restore deleted or overwritten objects from these older versions as needed. When a user or application deletes an object without specifying a version ID, Amazon S3 does not remove any data. Instead, it inserts a delete marker, which becomes the object’s current version.

While versioning is handy for recovery, it also means that once it is enabled, deleted and overwritten objects continue to consume the underlying storage capacity and to incur storage costs.
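To make the storage impact concrete, here is a minimal sketch of a versioning-enabled bucket as a toy in-memory model. It is not the S3 API: the `VersionedBucket` and `Version` classes and the sequential version IDs are hypothetical simplifications (real version IDs are opaque strings, and delete markers are modeled here as taking no space). It shows why overwrites and simple deletes never free capacity.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Version:
    version_id: str
    size: int                      # bytes of object data
    is_delete_marker: bool = False


@dataclass
class VersionedBucket:
    """Toy model of a versioning-enabled bucket (hypothetical, not boto3)."""
    versions: Dict[str, List[Version]] = field(default_factory=dict)
    _counter: int = 0

    def _next_id(self) -> str:
        # Real S3 version IDs are opaque strings; sequential IDs are a simplification.
        self._counter += 1
        return f"v{self._counter}"

    def put(self, key: str, size: int) -> str:
        # An upload never replaces data: it is appended as the new current version.
        v = Version(self._next_id(), size)
        self.versions.setdefault(key, []).append(v)
        return v.version_id

    def delete(self, key: str) -> str:
        # A simple delete (no version ID) only appends a delete marker.
        # Delete markers carry no object data (simplified to 0 bytes here).
        marker = Version(self._next_id(), 0, is_delete_marker=True)
        self.versions.setdefault(key, []).append(marker)
        return marker.version_id

    def current(self, key: str) -> Optional[Version]:
        history = self.versions.get(key, [])
        return history[-1] if history else None

    def stored_bytes(self) -> int:
        # Every version, current or noncurrent, still occupies storage.
        return sum(v.size for history in self.versions.values() for v in history)


bucket = VersionedBucket()
bucket.put("receipts/r1.jpg", 500_000)
bucket.put("receipts/r1.jpg", 500_000)   # overwrite: first upload becomes noncurrent
bucket.delete("receipts/r1.jpg")         # delete: the marker becomes the current version
print(bucket.current("receipts/r1.jpg").is_delete_marker)
print(bucket.stored_bytes())
```

After one overwrite and one delete, the object looks deleted, yet both uploads are still stored and counted.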

Amazon S3 Lifecycle Configuration

An Amazon S3 Lifecycle configuration is a collection of rules that define lifecycle actions Amazon S3 applies automatically to a group of objects. These can be transition actions, which move the current versions of objects between S3 storage classes, or expiration actions, which define when objects expire.

What Are S3 Transition Actions?

Transition actions are rules that move the current versions of S3 objects from one storage class to another once the objects reach a specified age, expressed in days.

For example, a transition rule can automatically move Amazon S3 objects from the default S3 Standard storage class to S3 Standard-IA (Infrequent Access) 30 days after they were created, reducing storage costs. The same rule can also archive those objects about three months later by moving them from S3 Standard-IA to S3 Glacier or S3 Glacier Deep Archive, reducing storage costs further.
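As a sketch, the transition example above could be written as a lifecycle rule in the JSON structure used by the S3 `PutBucketLifecycleConfiguration` API, which is the same shape the AWS CLI and boto3 accept. The rule ID and the whole-bucket filter are hypothetical choices. `Days` counts from object creation for every transition, so "another three months" after day 30 becomes roughly day 120. Using `DEEP_ARCHIVE` instead of `GLACIER` would target S3 Glacier Deep Archive.

```python
import json

# Hypothetical rule ID; the empty prefix applies the rule to the whole bucket.
transition_rule = {
    "ID": "tier-down-after-30-days",
    "Filter": {"Prefix": ""},
    "Status": "Enabled",
    "Transitions": [
        # 30 days after creation: S3 Standard -> S3 Standard-IA
        {"Days": 30, "StorageClass": "STANDARD_IA"},
        # about three months later (day 120 after creation): -> S3 Glacier
        {"Days": 120, "StorageClass": "GLACIER"},
    ],
}

lifecycle_configuration = {"Rules": [transition_rule]}

# JSON shape accepted by, for example:
#   aws s3api put-bucket-lifecycle-configuration --lifecycle-configuration file://...
print(json.dumps(lifecycle_configuration, indent=2))
```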

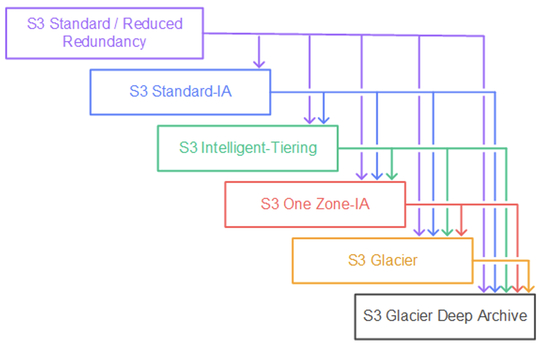

Amazon S3 storage tier transitions can follow the waterfall model as illustrated below:

Source: AWS

Source: AWS

When the lifecycle patterns of data are known clearly, customers can select specific storage classes for the data to transition to. When the lifecycle patterns of data are not clearly known, data can be transitioned to the S3 Intelligent-Tiering class instead, where Amazon S3 will manage the tiering behind the scenes.

Because each storage class has a different cost structure, tiering S3 objects between classes lets organizations reduce their cloud storage costs throughout the data’s lifecycle.

Consider an expense claim system that frequently uploads images of receipts to Amazon S3. These images are accessed frequently during the first 30-60 days, while the expense claims are validated and processed. Once a claim has been processed and paid out, its receipt images are no longer accessed often and can safely be moved to the less expensive S3 Standard-IA tier to save costs.

Once the infrequent access period also elapses (for example, once a back-end quarterly accounting cycle has completed), the images of these receipts will no longer need to be accessed. They can now be automatically moved off to cheaper archive tiers such as Glacier for long-term storage.
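The receipt scenario can be traced day by day with a small helper. The `receipts/` prefix and the 60- and 150-day thresholds are hypothetical values chosen to match the narrative (about two months of active use, then one more quarter in Standard-IA). `storage_class_on_day` is an illustrative function that only mirrors the rule's intent: S3 applies lifecycle transitions asynchronously, so objects may move some time after a threshold is reached.

```python
from typing import Dict, List

# Hypothetical rule for the expense-claim example.
receipt_rule: Dict = {
    "ID": "expense-receipts",
    "Filter": {"Prefix": "receipts/"},
    "Status": "Enabled",
    "Transitions": [
        {"Days": 60, "StorageClass": "STANDARD_IA"},   # claim processed and paid
        {"Days": 150, "StorageClass": "GLACIER"},      # quarterly accounting done
    ],
}


def storage_class_on_day(rule: Dict, age_days: int) -> str:
    """Return the storage class the rule targets for an object of the given age.

    Illustrative only: real transitions run asynchronously, so an object may
    move some time after its threshold is reached.
    """
    storage_class = "STANDARD"  # assume objects are uploaded to S3 Standard
    transitions: List[Dict] = sorted(rule["Transitions"], key=lambda t: t["Days"])
    for t in transitions:
        if age_days >= t["Days"]:
            storage_class = t["StorageClass"]
    return storage_class


for age in (10, 60, 90, 200):
    print(age, storage_class_on_day(receipt_rule, age))
```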

What Are S3 Expiration Actions?

Similar to transition actions, expiration actions let customers define when the current versions of S3 objects expire. In an unversioned bucket, Amazon S3 permanently removes an expired object. In a versioning-enabled bucket, expiring the current version does not delete any data: S3 adds a delete marker, and the expired version becomes a noncurrent version. Further expiration actions in the lifecycle policy can then permanently delete noncurrent versions and clean up expired object delete markers, freeing up storage and reducing ongoing cloud storage costs.

Note that Amazon S3 removes expired objects asynchronously, so there may be a delay between when an object expires and when it is actually removed. However, users are not charged for the storage of expired objects.

Leveraging S3 Lifecycle Management Configurations to Delete S3 Objects

Amazon S3 objects, including older versions of objects, incur storage costs until they are permanently deleted. Many organizations that use Amazon S3 with versioning find their storage costs climbing steadily, because deleting or overwriting an object does not actually free the underlying storage. These costs accumulate over time as new S3 objects are added and existing objects are overwritten.

The AWS SDKs, the AWS Command Line Interface (CLI), and the Amazon S3 console all provide ways to delete S3 objects and older object versions, either manually or programmatically. At scale, however, this process becomes cumbersome and requires additional code or administrative effort.
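For comparison, the manual route looks roughly like the sketch below. It assumes the response shape of the S3 `ListObjectVersions` API as returned by boto3 (a `Versions` list whose entries carry `Key`, `VersionId`, and `IsLatest`) and sends deletes in batches of at most 1,000 keys, the per-request limit of `DeleteObjects`. The helper names are hypothetical. `plan_noncurrent_deletes` is pure Python so it can be checked without AWS credentials, and boto3 is imported only inside `delete_noncurrent_versions`.

```python
from typing import Dict, Iterable, List

MAX_KEYS_PER_DELETE = 1000  # DeleteObjects accepts at most 1,000 keys per request


def plan_noncurrent_deletes(pages: Iterable[Dict]) -> List[List[Dict]]:
    """Turn ListObjectVersions pages into DeleteObjects batches.

    Selects every noncurrent version (IsLatest is False). Current versions and
    delete markers are left alone. All targets are collected before any delete.
    """
    targets = [
        {"Key": v["Key"], "VersionId": v["VersionId"]}
        for page in pages
        for v in page.get("Versions", [])
        if not v["IsLatest"]
    ]
    return [
        targets[i:i + MAX_KEYS_PER_DELETE]
        for i in range(0, len(targets), MAX_KEYS_PER_DELETE)
    ]


def delete_noncurrent_versions(bucket: str) -> None:
    """Permanently delete noncurrent versions (requires boto3 and credentials)."""
    import boto3  # imported lazily so the planning logic runs without boto3

    s3 = boto3.client("s3")
    pages = s3.get_paginator("list_object_versions").paginate(Bucket=bucket)
    for batch in plan_noncurrent_deletes(pages):
        # The "Errors" list in each response is not checked in this sketch.
        s3.delete_objects(Bucket=bucket, Delete={"Objects": batch, "Quiet": True})


# Offline example using a hand-written page in the ListObjectVersions shape.
sample_pages = [{
    "Versions": [
        {"Key": "a.txt", "VersionId": "v2", "IsLatest": True},
        {"Key": "a.txt", "VersionId": "v1", "IsLatest": False},
    ],
    "DeleteMarkers": [],
}]
print(plan_noncurrent_deletes(sample_pages))
```

Even this sketch skips error handling and would still need to be scheduled and rerun regularly, which is the kind of overhead lifecycle rules remove.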

S3 Lifecycle configurations offer a more convenient way to address this. Expiration actions in a lifecycle configuration can automatically delete previous (noncurrent) versions as well as expired objects, with no user involvement. Enterprises can apply this at scale to shrink their storage footprint and reduce the associated storage costs without any additional administrative overhead.

Customers can use three Amazon S3 Lifecycle expiration actions:

- Expire current versions of objects: This action automatically expires the current version of the objects stored in the bucket a specified number of days after they were created.

In the expense claim example above, all receipt images older than ‘X’ days (where X is the retention period required for compliance) can be expired automatically from Glacier archive storage this way. In an unversioned bucket, this stops them from incurring S3 storage costs from that point onward; in a versioning-enabled bucket, the expired versions become noncurrent, so the next action is also needed to remove them permanently.

- Permanently delete noncurrent versions of objects: This action automatically and permanently removes older versions of the objects in a bucket a specified number of days after they become noncurrent, with no user involvement.

- Delete expired object delete markers and incomplete multipart uploads: This action removes expired object delete markers (delete markers with no noncurrent versions left behind them) and stops and removes multipart uploads that are not completed within a specified number of days, which saves storage costs.
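The three actions above correspond to lifecycle rule elements: `Expiration` with `Days` (expire current versions), `NoncurrentVersionExpiration` (delete noncurrent versions), and `Expiration` with `ExpiredObjectDeleteMarker` plus `AbortIncompleteMultipartUpload` (delete markers and incomplete uploads). The hypothetical `expiration_rule` helper below assembles them into a rule dict. The 2,555-day (about seven years) stand-in for X, the 30- and 7-day periods, and the prefixes are placeholders, not recommendations. Because S3 does not allow `ExpiredObjectDeleteMarker` in the same `Expiration` element as `Days`, the helper rejects that combination and the example builds two separate rules.

```python
import json
from typing import Dict, Optional


def expiration_rule(
    rule_id: str,
    prefix: str,
    *,
    expire_current_days: Optional[int] = None,
    noncurrent_days: Optional[int] = None,
    clean_delete_markers: bool = False,
    abort_multipart_days: Optional[int] = None,
) -> Dict:
    """Build one lifecycle rule from the three expiration actions (hypothetical helper)."""
    if expire_current_days is not None and clean_delete_markers:
        # ExpiredObjectDeleteMarker cannot be specified with Days or Date.
        raise ValueError("use separate rules for current-version expiry "
                         "and delete-marker cleanup")
    rule: Dict = {"ID": rule_id, "Filter": {"Prefix": prefix}, "Status": "Enabled"}
    # Action 1: expire current versions N days after creation.
    if expire_current_days is not None:
        rule["Expiration"] = {"Days": expire_current_days}
    # Action 3a: remove delete markers that have no noncurrent versions left.
    if clean_delete_markers:
        rule["Expiration"] = {"ExpiredObjectDeleteMarker": True}
    # Action 2: permanently delete versions N days after they become noncurrent.
    if noncurrent_days is not None:
        rule["NoncurrentVersionExpiration"] = {"NoncurrentDays": noncurrent_days}
    # Action 3b: abort multipart uploads still incomplete after N days.
    if abort_multipart_days is not None:
        rule["AbortIncompleteMultipartUpload"] = {
            "DaysAfterInitiation": abort_multipart_days
        }
    if len(rule) == 3:  # only ID, Filter, and Status: no action was requested
        raise ValueError("a lifecycle rule needs at least one action")
    return rule


# Hypothetical receipts rule: ~7-year retention ("X"), 30 days for old versions.
receipts_rule = expiration_rule("expire-receipts", "receipts/",
                                expire_current_days=2555, noncurrent_days=30)
# Hypothetical bucket-wide housekeeping rule for a versioned bucket.
cleanup_rule = expiration_rule("versioned-cleanup", "", noncurrent_days=30,
                               clean_delete_markers=True, abort_multipart_days=7)

print(json.dumps({"Rules": [receipts_rule]}, indent=2))
print(json.dumps({"Rules": [cleanup_rule]}, indent=2))
```

Each printed configuration is meant as a standalone example; before combining rules with overlapping filters in one bucket, it is worth checking how the S3 Lifecycle documentation resolves overlapping actions.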

How to Set Up an S3 Lifecycle Policy to Delete Objects

An S3 object expiration lifecycle configuration can be created with several different tools: the AWS CLI, the AWS SDKs, the Amazon S3 console, or REST API calls. Refer to the Amazon S3 Lifecycle user guide for detailed step-by-step instructions.
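As a minimal sketch of the programmatic route, the snippet below defines a whole-bucket rule that expires current versions 90 days after creation, mirroring the console example in this section. `write_config` saves it as a JSON file for the AWS CLI, for example `aws s3api put-bucket-lifecycle-configuration --bucket example-bucket --lifecycle-configuration file://lifecycle.json`, and `apply_config` applies it with boto3's `put_bucket_lifecycle_configuration`. The bucket name `example-bucket`, the file name, the rule ID, and both helper names are placeholders. Note that this API replaces any lifecycle configuration already attached to the bucket rather than merging with it.

```python
import json
from pathlib import Path

# Whole-bucket rule: expire current versions 90 days after creation.
lifecycle = {
    "Rules": [
        {
            "ID": "expire-after-90-days",   # hypothetical rule ID
            "Filter": {"Prefix": ""},        # empty prefix applies to every object
            "Status": "Enabled",
            "Expiration": {"Days": 90},
        }
    ]
}


def write_config(path: str = "lifecycle.json") -> Path:
    """Write the configuration in the JSON shape the AWS CLI expects."""
    out = Path(path)
    out.write_text(json.dumps(lifecycle, indent=2))
    return out


def apply_config(bucket: str) -> None:
    """Apply the configuration with boto3 (needs boto3 and AWS credentials).

    This replaces any lifecycle configuration already on the bucket.
    """
    import boto3  # imported lazily so the rest runs without boto3

    s3 = boto3.client("s3")
    s3.put_bucket_lifecycle_configuration(
        Bucket=bucket, LifecycleConfiguration=lifecycle
    )


if __name__ == "__main__":
    print(json.dumps(lifecycle, indent=2))
    # write_config()                    # writes lifecycle.json for the CLI
    # apply_config("example-bucket")    # placeholder bucket name
```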

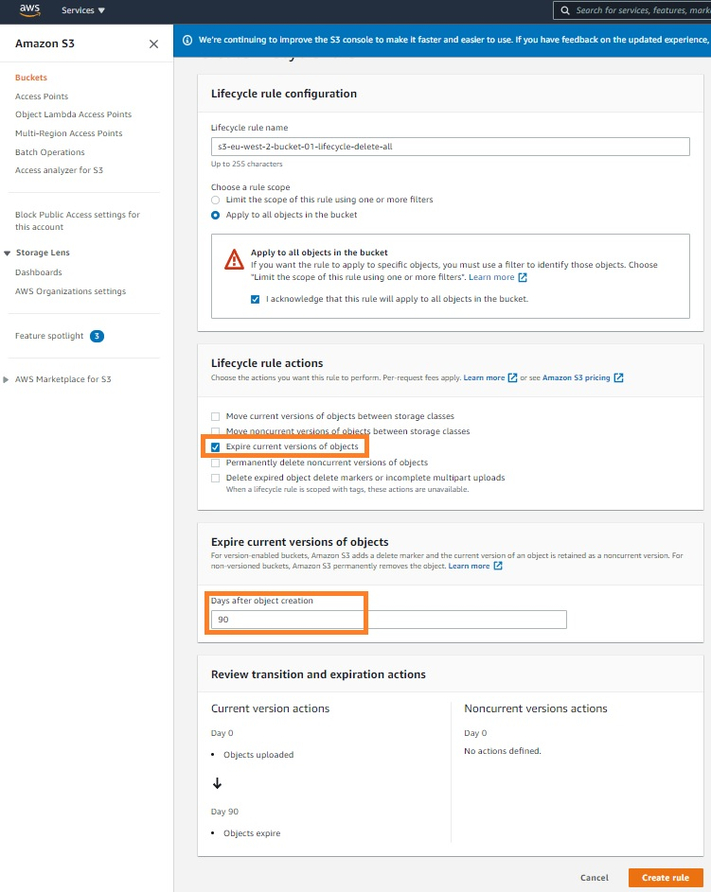

The screenshot below shows how the Amazon S3 console UI (accessed via the “Management” tab within the S3 bucket) can be leveraged to configure a S3 lifecycle rule to expire the current version of S3 objects. For the purpose of this example, expiration has been set to kick in after 90 days of the object creation for the whole bucket.

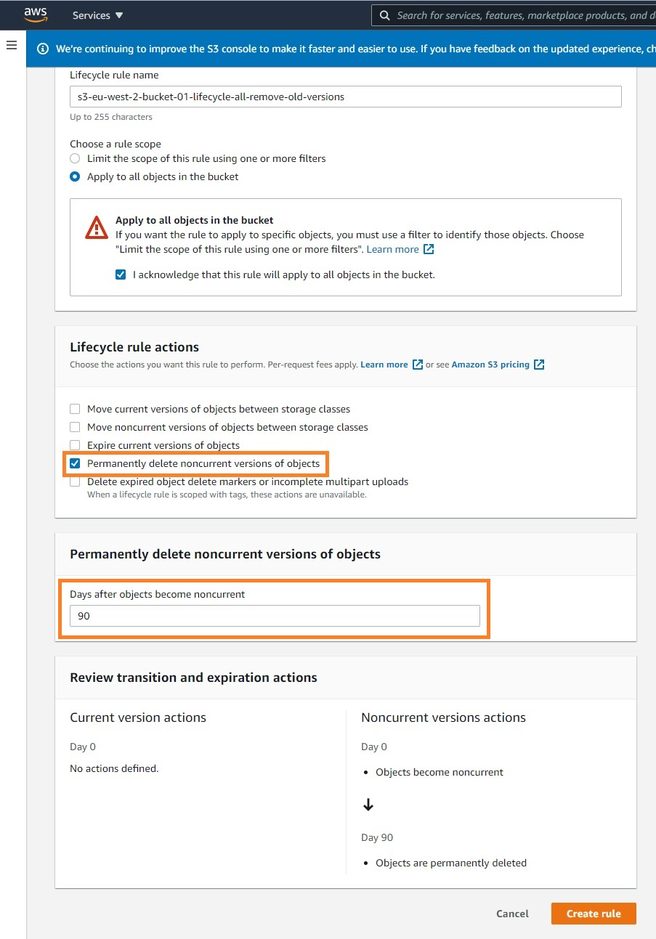

For removing older versions of S3 objects, the same AWS console can be easily leveraged to create the lifecycle rule, as illustrated below.

Do Even More with AWS S3 Object Storage

When it comes to Amazon S3 lifecycle rules, transition actions and expiration actions both give customers a convenient, scalable, policy-driven way to reduce storage consumption and overhead costs.

Customers planning to use these configurations should consult the Amazon S3 documentation on lifecycle management.

If you are concerned about storage efficiency, you might also be interested in NetApp Cloud Volumes ONTAP for AWS. Also available for Azure and Google Cloud, Cloud Volumes ONTAP gives users data management features that aren’t available in the public cloud, including space- and cost-efficient storage snapshots and instant, zero-capacity data cloning.