August 25, 2020

Topics: Cloud Volumes ONTAP, Azure, Data Migration, Advanced

9 minute read

Migrating data from an existing repository to Azure Blob and keeping data in sync in hybrid deployments can both be significant hurdles in many organizations’ cloud journeys. There are several Azure-native and third-party tools and services to help migrate data to Azure, the most popular ones being AzCopy, Azure Import/Export, Azure PowerShell, and Azure Data Box. How can you know which is the right choice for your Azure migration?

Selecting the right tool depends on several factors, including migration timelines, data size, available network bandwidth, and whether the migration must be done online or offline. This blog explores some of these Azure migration tools and walks through the steps to migrate files to Azure Blob storage, all of which can be enhanced with NetApp Cloud Volumes ONTAP’s advanced data management capabilities for data migration, performance, and protection in Azure Blob storage.


Tools to Upload Data to Azure Blob Storage

With data migration and mobility being critical components of cloud adoption, Microsoft offers multiple native tools and services to support customers with these processes. Let’s explore some of these tools in detail.

AzCopy is a command-line utility used to transfer data to and from Azure storage. It is a lightweight tool that can be installed on Windows, Linux, or Mac machines to initiate the data transfer to Azure. AzCopy can be used in a number of scenarios, such as transferring data from on-premises to Azure Blob and Azure Files or from Amazon S3 to Azure storage. The tool can also be used to copy data to or from Azure Stack.

See “How to Upload Files to Azure Blob Storage Using AzCopy” below for a walkthrough.

Azure PowerShell is another command line option for transferring data from on-premises to Azure Blob storage. The Azure PowerShell command Set-AzStorageBlobContent can be used to copy data to Azure blob storage.

See “What Is Azure PowerShell and How to Use It” below for a walkthrough.

Azure Import/Export is a physical transfer method used in large data transfer scenarios where the data needs to be imported to or exported from Azure Blob storage or Azure Files. In addition to large-scale data transfers, this solution can also be used for use cases like content distribution and data backup/restore. Data is shipped to Azure data centers on customer-supplied SSDs or HDDs.

Azure Data Box uses a proprietary Data Box storage device provided by Microsoft to transfer data into and out of Azure data centers. The service is recommended in scenarios where the data size is above 40 TB and there is limited bandwidth to transfer data over the network. The most popular use cases are one-time bulk migration of data, initial data transfers to Azure followed by incremental transfers over the network, as well as for periodic upload of bulk data.
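The trade-off between data size and network bandwidth described above can be sketched with some simple arithmetic. The following Python snippet is only an illustration: the function names, the 80% link utilization, and the 14-day cutoff are assumptions chosen for the example, not values published by Microsoft.

```python
SECONDS_PER_DAY = 86_400


def transfer_days(data_tb: float, bandwidth_mbps: float, utilization: float = 0.8) -> float:
    """Estimate how many days an online transfer would take.

    data_tb        -- data size in decimal terabytes (1 TB = 10**12 bytes)
    bandwidth_mbps -- nominal link speed in megabits per second
    utilization    -- assumed fraction of the link available for the transfer
    """
    if data_tb < 0 or bandwidth_mbps <= 0 or not (0 < utilization <= 1):
        raise ValueError("size must be >= 0, bandwidth > 0, utilization in (0, 1]")
    total_bits = data_tb * 1e12 * 8
    effective_bits_per_second = bandwidth_mbps * 1e6 * utilization
    return total_bits / effective_bits_per_second / SECONDS_PER_DAY


def suggest_transfer_mode(data_tb: float, bandwidth_mbps: float, max_days: float = 14.0) -> str:
    """Return "online" or "offline" based on an assumed acceptable duration."""
    days = transfer_days(data_tb, bandwidth_mbps)
    return "online" if days <= max_days else "offline"


if __name__ == "__main__":
    print(f"{transfer_days(40, 100):.1f} days")  # 40 TB over a 100 Mbps link
    print(suggest_transfer_mode(40, 100))
    print(suggest_transfer_mode(1, 1000))
```

Under these assumptions, 40 TB over a 100 Mbps link takes roughly 46 days, which is the kind of result that points toward an offline option such as Azure Data Box or Azure Import/Export, while smaller data sets on faster links remain good candidates for AzCopy or Azure PowerShell.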

How to Upload Files to Azure Blob Storage Using AzCopy

AzCopy is available for Windows, Linux, and macOS systems. There is no installation involved, as AzCopy runs as an executable file. For Windows and macOS, the zip file needs to be downloaded and extracted to run the tool. For Linux, the tar file has to be downloaded and decompressed before running the commands.

The AzCopy tool can be authorized to access Azure Blob storage either using Azure AD or a SAS token. While using Azure AD authentication, customers can choose to authenticate with a user account before initiating the data copy. While using automation scripts, Azure AD authentication can be achieved using a service principal or managed identity.
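When a SAS token is used instead of Azure AD, the token is appended to the destination URL as a query string. The sketch below shows one way to assemble such a URL in Python before handing it to AzCopy. The helper name `container_url` is hypothetical, and the regular expressions reflect the general Azure naming rules for storage accounts and containers (special containers such as `$root` are deliberately not handled).

```python
import re
from typing import Optional

# Storage account names: 3-24 characters, lowercase letters and digits only.
ACCOUNT_RE = re.compile(r"[a-z0-9]{3,24}")
# Container names: 3-63 characters, lowercase letters, digits, and single
# hyphens; must start and end with a letter or digit.
CONTAINER_RE = re.compile(r"(?!.*--)[a-z0-9][a-z0-9-]{1,61}[a-z0-9]")


def container_url(account: str, container: str, sas_token: Optional[str] = None) -> str:
    """Build a Blob container URL, optionally with a SAS token appended."""
    if not ACCOUNT_RE.fullmatch(account):
        raise ValueError(f"invalid storage account name: {account!r}")
    if not CONTAINER_RE.fullmatch(container):
        raise ValueError(f"invalid container name: {container!r}")
    url = f"https://{account}.blob.core.windows.net/{container}"
    if sas_token:
        # Accept tokens with or without the leading "?".
        url += "?" + sas_token.lstrip("?")
    return url


if __name__ == "__main__":
    print(container_url("teststor1110", "folder1"))
    # Placeholder token for illustration only; a real SAS token is much longer.
    print(container_url("teststor1110", "folder1", "?sv=<version>&sig=<signature>"))
```

Validating the names up front catches typos such as uppercase letters before AzCopy is invoked. Treat a URL that carries a SAS token as a secret, since anyone holding it gets the access the token grants.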

In this walkthrough of AzCopy we will be using authentication through an Azure AD user account. The account should be assigned either the Storage Blob Data Contributor or the Storage Blob Data Owner role. The role can be assigned at the scope of the storage container where the data is to be copied, or at the level of the storage account, resource group, or subscription that contains it.

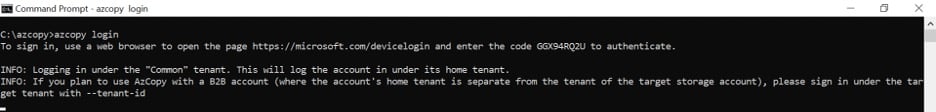

1. Browse to the folder where AzCopy is downloaded and run the following command to log in:

azcopy login

You will now see details about how to log in to https://microsoft.com/devicelogin. Follow the instructions in the output and use the code provided to authenticate.

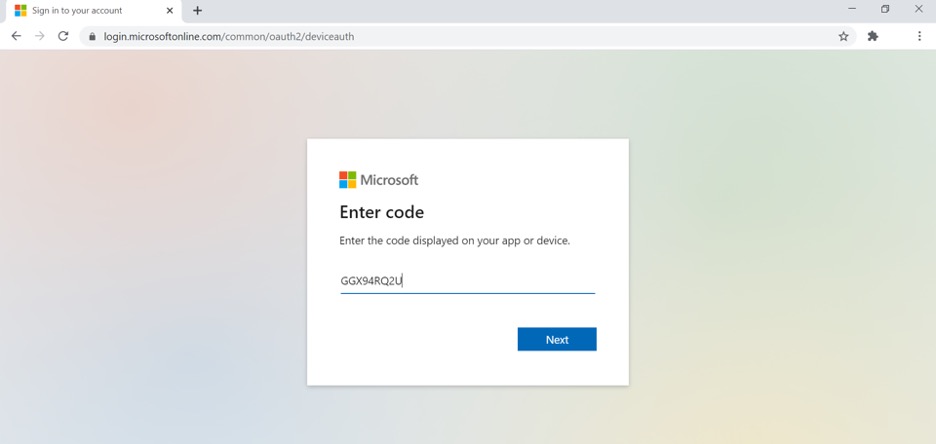

2. On the login page, enter your Azure credentials with access to the storage and click on “Next.”

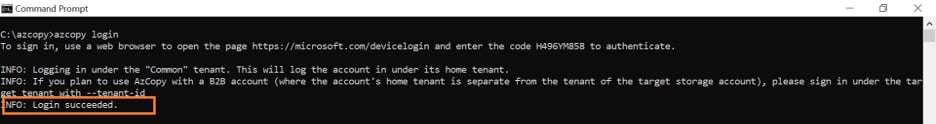

3. Back in the command line, you will receive a “login succeeded” message.

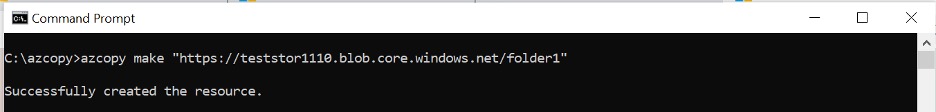

4. Execute the following AzCopy command to create a container in the storage account to upload files:

azcopy make "https://<azure storage account name>.blob.core.windows.net/<container>"

Update the <azure storage account name> placeholder with the name of the storage account in Azure and <container> with the name of the container you want to create. Below, you can see a sample command:

azcopy make "https://teststor1110.blob.core.windows.net/folder1"

5. To copy a file from your local machine to the storage account, run the following command:

azcopy copy "<Location of file in local disk>" "https://<azure storage account name>.blob.core.windows.net/<container>/"

Update the <Location of file in local disk> and <azure storage account name> placeholders in the above command to reflect values of your environment, and <container> with the name of the storage container you created in step 4.

Sample command given below:

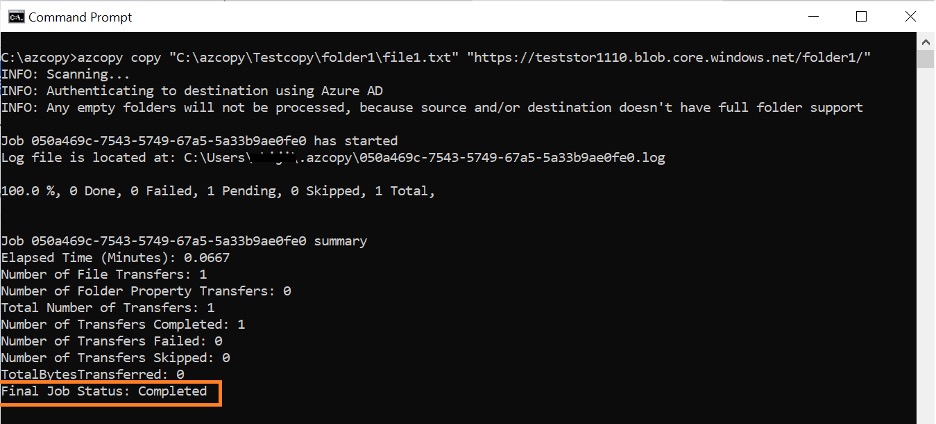

azcopy copy 'C:\azcopy\Testcopy\folder1\file1.txt' 'https://teststor1110.blob.core.windows.net/folder1'

Note: In the above command, folder1 is the container that was created in step 4.

Upon successful completion of the command, the job status will be shown as Completed.

6. To copy all files from a local folder to the Azure storage container, run the following command:

azcopy copy "<Location of folder in local disk>" "https://<azure storage account name>.blob.core.windows.net/<container>" --recursive

Update the <Location of folder in local disk>, <azure storage account name>, and <container> placeholders in the above command to reflect values of your environment. Sample command given below:

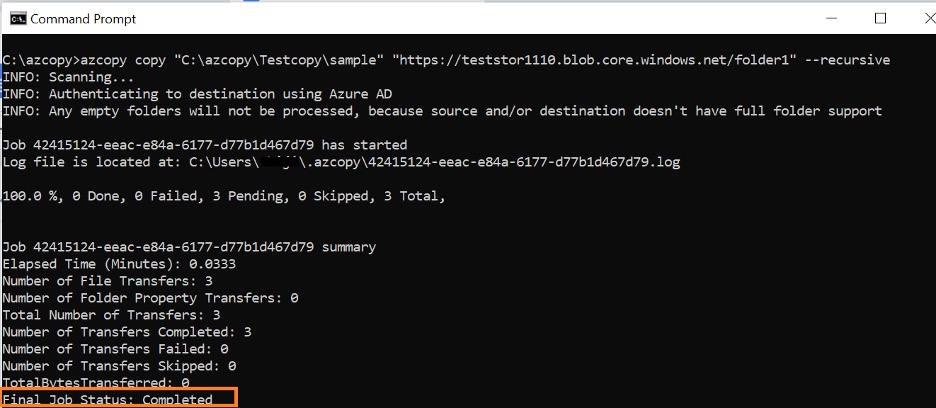

azcopy copy "C:\azcopy\Testcopy\sample" "https://teststor1110.blob.core.windows.net/folder1" --recursive

Your source folder content will appear as below:

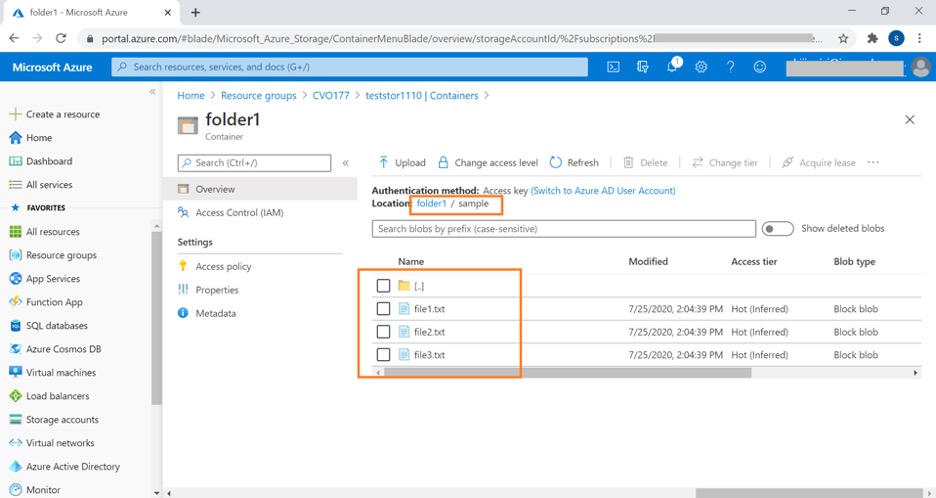

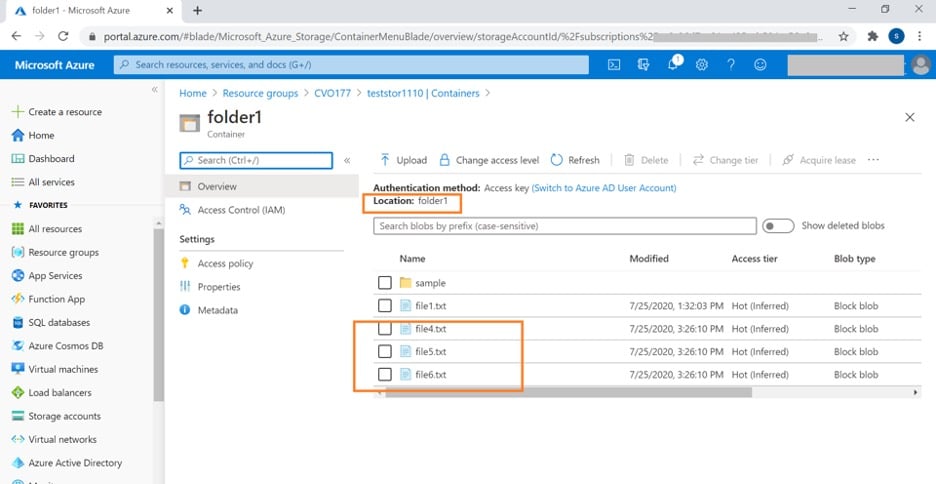

7. If you browse to the storage account in the Azure portal, you can see that the folder has been created inside the Azure storage container and that the files are copied inside the folder.

8. To copy the contents of the local folder without creating a new folder in Azure storage, you can use the following command:

azcopy copy "<Location of folder in local disk>/*" "https://<azure storage account name>.blob.core.windows.net/<container>"

Sample command given below:

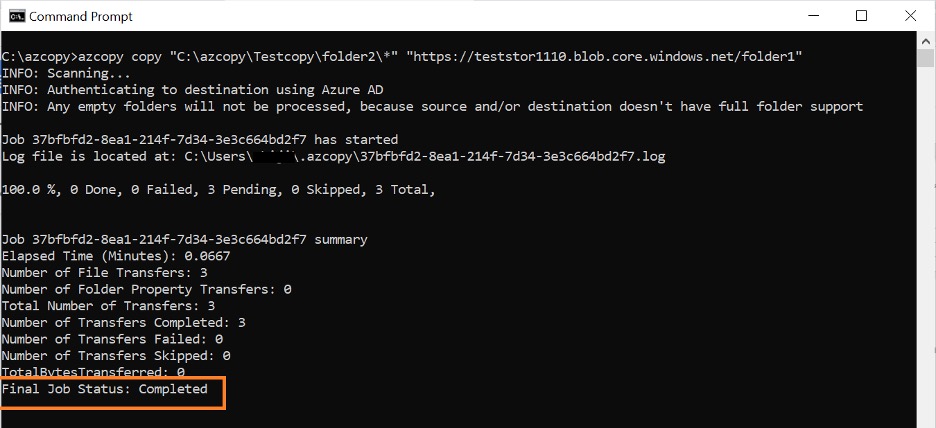

azcopy copy "C:\azcopy\Testcopy\folder2\*" "https://teststor1110.blob.core.windows.net/folder1"

9. The additional files are copied from the local folder named folder2 to the Azure container folder1. Note that the source folder is not created in this case.
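The difference between the two folder copy forms used in the steps above (copying the folder itself with --recursive versus copying its contents with a trailing wildcard) comes down to how the resulting blob names are formed. The Python sketch below models that naming behavior as described in this walkthrough. It does not call AzCopy, the function names are hypothetical, and the file names in the example are made up for illustration.

```python
from pathlib import PureWindowsPath
from typing import Iterable, List


def blob_names_recursive(source_dir: str, files: Iterable[str]) -> List[str]:
    """Names produced when the folder itself is copied with --recursive.

    The source folder name becomes a virtual folder inside the container.
    """
    src = PureWindowsPath(source_dir)
    names = []
    for f in files:
        rel = PureWindowsPath(f).relative_to(src)
        names.append("/".join((src.name,) + rel.parts))
    return names


def blob_names_wildcard(source_dir: str, files: Iterable[str]) -> List[str]:
    """Names produced when '<folder>\\*' is copied without --recursive.

    Only files directly inside the folder are uploaded, and the folder
    name itself is not part of the blob name.
    """
    src = PureWindowsPath(source_dir)
    return [PureWindowsPath(f).name for f in files if PureWindowsPath(f).parent == src]


if __name__ == "__main__":
    # Hypothetical local files under the sample folder.
    local_files = [
        r"C:\azcopy\Testcopy\sample\a.txt",
        r"C:\azcopy\Testcopy\sample\sub\b.txt",
    ]
    print(blob_names_recursive(r"C:\azcopy\Testcopy\sample", local_files))
    print(blob_names_wildcard(r"C:\azcopy\Testcopy\sample", local_files))
```

Running a quick model like this before a large copy can help confirm whether the container will end up with an extra top-level folder, which is awkward to undo once many blobs have been written.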

What Is Azure PowerShell and How to Use It

Azure PowerShell cmdlets can be used to manage Azure resources from PowerShell commands and scripts. As an alternative to AzCopy, PowerShell can also be used to upload files from a local folder to Azure storage, using the Azure PowerShell command Set-AzStorageBlobContent.

File Transfers to Azure Blob Storage Using Azure PowerShell

In this section we will look into the commands that can be used to upload files to Azure blob storage using PowerShell from a Windows machine.

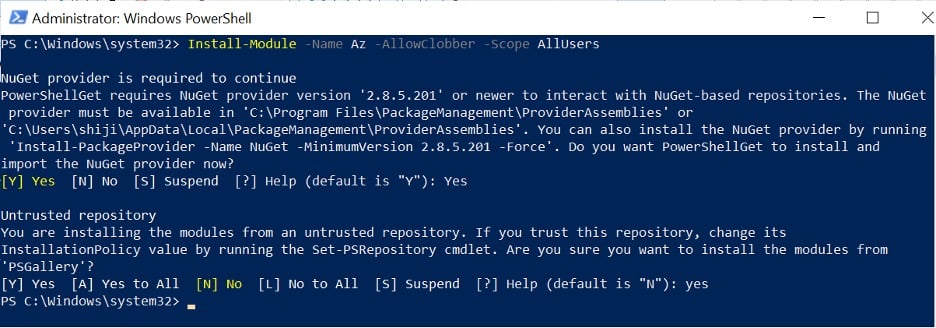

1. Install the latest version of Azure PowerShell for all users on the system in a PowerShell session opened with administrator rights using the following command:

Install-Module -Name Az -AllowClobber -Scope AllUsers

Select “Yes” when prompted for permissions to install packages.

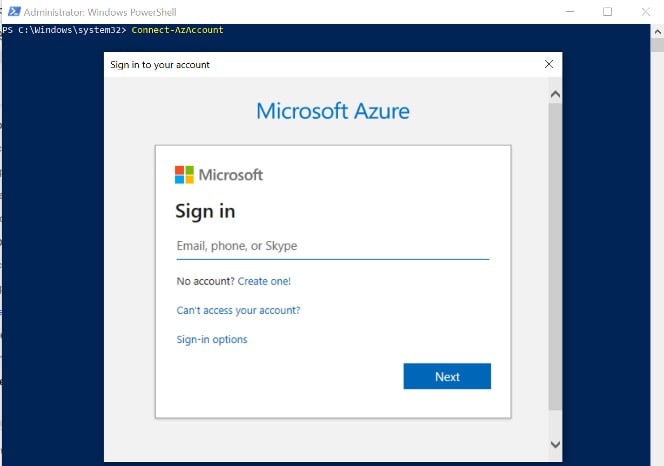

2. Use the following command and sign in to your Azure subscription when prompted:

Connect-AzAccount

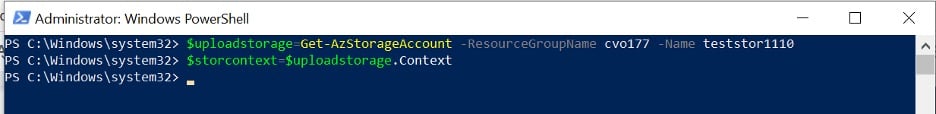

3. Get the storage account context to be used for the data transfer using the following commands:

$uploadstorage=Get-AzStorageAccount -ResourceGroupName <resource group name> -Name <storage account name>

$storcontext=$uploadstorage.Context

Update the placeholders <resource group name> and <storage account name> with values specific to your environment, as in the sample command given below:

$uploadstorage=Get-AzStorageAccount -ResourceGroupName cvo177 -Name teststor1110

$storcontext=$uploadstorage.Context

4. Run the following command to upload a file from your local directory to a container in Azure storage:

Set-AzStorageBlobContent -Container "<storage container name>" -File "<Location of file in local disk>" -Context $storcontext

Replace the placeholders <storage container name> and <Location of file in local disk> with values specific to your environment. Sample given below:

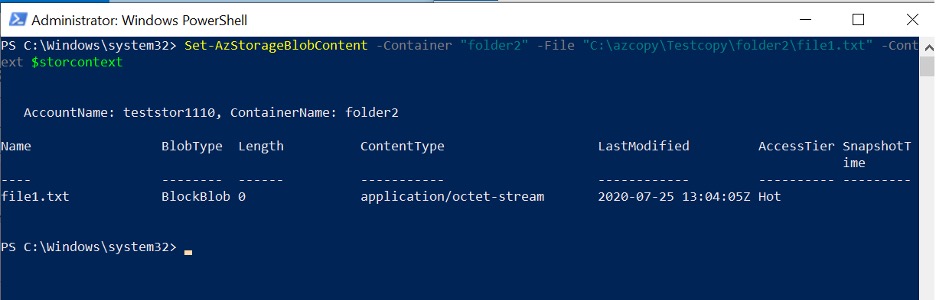

Set-AzStorageBlobContent -Container "folder2" -File "C:\azcopy\Testcopy\folder2\file1.txt" -Context $storcontext

Once the file is uploaded successfully, you will get a message similar to what you can see in the screenshot below:

5. To upload all files in the current folder, run the following command:

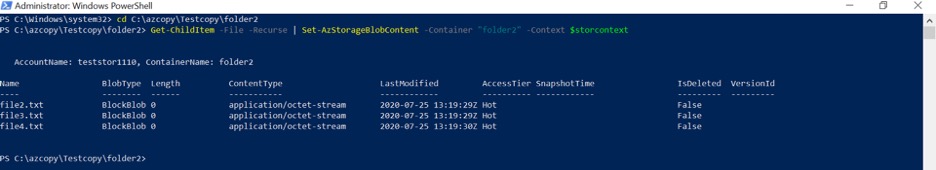

Get-ChildItem -File -Recurse | Set-AzStorageBlobContent -Container "<storage container name>" -Context $storcontext

Sample command given below:

Get-ChildItem -File -Recurse | Set-AzStorageBlobContent -Container "folder2" -Context $storcontext

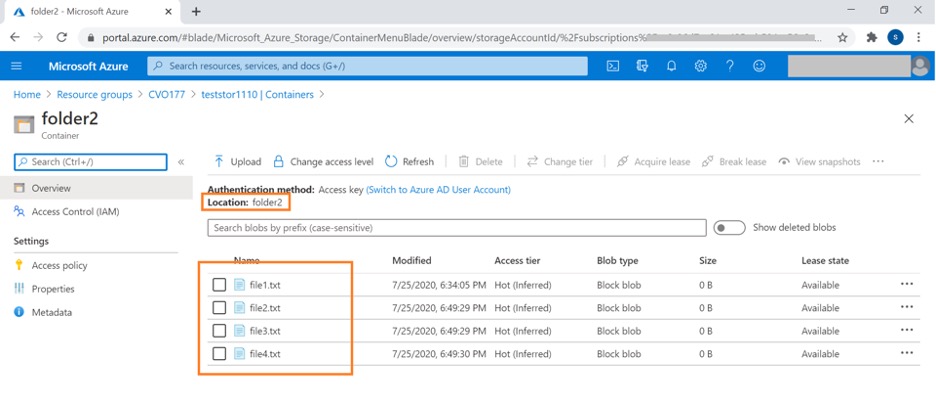

6. If you browse to the Azure storage container, you will see all the files uploaded in steps 4 and 5.
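Before piping a whole folder into Set-AzStorageBlobContent, it can be useful to preview which files a recursive listing would pick up and how much data that represents. The Python sketch below is a rough, local-only analogue of the Get-ChildItem -File -Recurse listing used above. The function names are hypothetical, nothing is uploaded, and unlike Get-ChildItem without -Force it also includes hidden files.

```python
from pathlib import Path
from typing import List, Tuple


def list_upload_candidates(root: str) -> List[Tuple[str, int]]:
    """Return (relative path, size in bytes) for every regular file under root."""
    base = Path(root)
    return sorted(
        (p.relative_to(base).as_posix(), p.stat().st_size)
        for p in base.rglob("*")
        if p.is_file()
    )


def total_bytes(candidates: List[Tuple[str, int]]) -> int:
    """Sum the sizes of all candidate files."""
    return sum(size for _, size in candidates)


if __name__ == "__main__":
    candidates = list_upload_candidates(".")
    for rel_path, size in candidates:
        print(f"{size:>12}  {rel_path}")
    print(f"{len(candidates)} files, {total_bytes(candidates)} bytes in total")
```

The total size from a preview like this is also a reasonable input for deciding whether an online upload is practical for the available bandwidth.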

NetApp Cloud Volumes ONTAP: Accelerate Cloud Data Migration

We have discussed how data migration to Azure can be easily achieved using AzCopy and Azure PowerShell commands. Customers can also leverage NetApp Cloud Volumes ONTAP for data migration to the cloud through trusted NetApp replication and cloning technology. Cloud Volumes ONTAP delivers a hybrid data management solution, spanning on-premises as well as multiple cloud environments.

Cloud Volumes ONTAP is distinguished by the value it provides to its customers through high availability, data protection, and storage efficiency features such as deduplication, compression, and thin provisioning. Cloud Volumes ONTAP volumes can be accessed by virtual machines in Azure over SMB/NFS protocols, and these features help achieve greater storage economy. Because the storage is used more efficiently, Azure storage costs are also reduced considerably.

NetApp Snapshot™ technology along with SnapMirror® data replication can ease data migration from on-premises environments to the cloud. While Snapshot technology can be used to take point-in-time backup copies of data from on-premises NetApp storage, SnapMirror data replication helps to replicate them to Cloud Volumes ONTAP volumes in Azure. SnapMirror can also be used to keep data between on-premises and cloud environments in sync for DR purposes.

NetApp FlexClone® data cloning technology helps in creating storage-efficient writable clones of on-premises volumes that can be integrated into CI/CD processes to deploy test/dev environments in the cloud. This enhances data portability from on-premises to the cloud and also within the cloud, all of which can be managed from a unified management pane. Thus, Cloud Volumes ONTAP helps organizations achieve agility and faster time to market for their applications.

Another NetApp data migration service is Cloud Sync, which can quickly and efficiently migrate data from any repository to object-based storage in the cloud, whether it’s from an on-prem system or between clouds.

Conclusion

Customers can choose from native tools like AzCopy and Azure PowerShell to upload files to Azure Blob Storage. They can also leverage Cloud Volumes ONTAP for advanced data management and migration capabilities using features like SnapMirror replication, NetApp Snapshots and FlexClone.