August 10, 2021

Topics: Cloud Tiering, Data Tiering | Advanced | 6 minute read

These days, hybrid cloud environments are a common blueprint for IT infrastructure. They offer numerous advantages, such as a broad range of services, immediate scalability, and pay-as-you-go pricing. Performance has also been a main consideration when connecting on-prem networks to cloud-based resources. How can you connect an on-prem network to the cloud, and how will that choice affect your system’s performance?

NetApp’s Cloud Tiering service, an easy bridge between on-prem and cloud storage, raises similar questions. If you’re planning to use Cloud Tiering, what performance can you expect when reading tiered data or retrieving it back on-prem?

In this article, we go over these common questions and introduce the Cloud Performance Test, which offers visibility into the performance of data moved to and from the cloud with Cloud Tiering.

How Do On-Premises Systems Connect to the Cloud?

At a high level, there are three main ways to connect your on-prem environment to the cloud:

- Public internet connections
- Direct links
- VPN

Let’s take a look at each of them below.

Public internet connections

The easiest way to access cloud-based resources is through public IPs assigned to your cloud-based servers or instances. Traffic between on-prem and cloud-based services then travels over public infrastructure, which is less secure and has more volatile latency. In this model you don’t control how traffic is routed from one endpoint to another, so performance tends to vary.

Some apps, such as a simple email application, can tolerate these drawbacks of the public internet; others require direct connections.
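“Variable” performance can be made concrete. The following Python sketch assumes you have already collected round-trip latency samples in milliseconds (the sample values below are invented for illustration, not measurements) and summarizes how widely they spread. A public internet path would typically show a larger standard deviation and a longer tail than a dedicated link.

```python
import statistics
from typing import Dict, List


def summarize_latency(samples_ms: List[float]) -> Dict[str, float]:
    """Summarize round-trip latency samples (in milliseconds) to quantify variability."""
    ordered = sorted(samples_ms)
    n = len(ordered)
    rank = (95 * n + 99) // 100  # nearest-rank 95th percentile: ceil(0.95 * n)
    return {
        "mean_ms": statistics.mean(ordered),
        "stdev_ms": statistics.stdev(ordered),  # sample standard deviation (needs n >= 2)
        "p95_ms": ordered[rank - 1],
        "spread_ms": ordered[-1] - ordered[0],
    }


# Hypothetical samples for illustration only; these are not measurements.
public_internet = [38, 41, 37, 95, 40, 62, 39, 120, 44, 41]
direct_link = [12, 12, 13, 12, 12, 13, 12, 12, 13, 12]

for name, samples in [("public internet", public_internet), ("direct link", direct_link)]:
    print(name, summarize_latency(samples))
```

Percentiles and spread matter more than the mean here: a few slow round trips on a public route can dominate the experience of an interactive app even when the average looks acceptable.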

Direct links

Major cloud providers offer links that connect your on-prem network directly to their cloud data centers and resources. These direct connections bypass the public internet and its service providers, offering faster speeds, more security, and consistently low latency.

These are the main options for establishing a dedicated private connection offered by the major cloud providers:

- AWS Direct Connect: AWS lets you connect your network directly to the nearest AWS region, which is already connected to the other regions. There are two options: Dedicated connections, which provide a direct point-to-point Ethernet connection of 1, 10, or 100 Gbps, and Hosted connections, provided through AWS Direct Connect Partners with preconfigured speeds from 50 Mbps to 10 Gbps.

- Azure ExpressRoute: ExpressRoute lets you extend your on-premises network over a private connection to Azure. These connections either go through peering partners or connectivity providers, or connect directly to the Microsoft cloud. ExpressRoute offers four connectivity models: Cloud Exchange co-location, point-to-point Ethernet connections, any-to-any (IPVPN) networks, and ExpressRoute Direct. Bandwidth options range from 50 Mbps all the way up to dual 100 Gbps connectivity with ExpressRoute Direct.

- Google Cloud Interconnect: Similar to AWS and Azure, Google offers a highly available, low-latency connection to Google’s network. You can extend your on-premises network to transfer data reliably into your Google Virtual Private Cloud (VPC) using either of two options: Dedicated Interconnect or Partner Interconnect. Dedicated Interconnect is a direct physical link between your location and Google, with 10 or 100 Gbps circuits for demanding, high-bandwidth workloads. If your use case doesn’t require that much performance, you can opt for Partner Interconnect, which provides connectivity through a supported service provider with capacities from 50 Mbps up to 50 Gbps.
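To put these bandwidth tiers in perspective, here is a back-of-the-envelope Python sketch estimating how long it takes to move 1 TB at several of the speeds listed above. It assumes decimal units (1 TB = 1,000 GB) and an illustrative 80% link efficiency; real throughput depends on protocol overhead, contention, and the endpoints themselves.

```python
def transfer_time_hours(data_gb: float, link_mbps: float, efficiency: float = 0.8) -> float:
    """Estimate hours to move data_gb (decimal) gigabytes over a link_mbps link.

    `efficiency` is an assumed fraction of nominal bandwidth actually achieved;
    0.8 is an illustrative guess, not a measured or vendor-published value.
    """
    bits = data_gb * 8 * 1000**3                     # gigabytes -> bits
    usable_bps = link_mbps * 1000**2 * efficiency    # megabits/s -> usable bits/s
    return bits / usable_bps / 3600                  # seconds -> hours


links = [("50 Mbps", 50), ("1 Gbps", 1_000), ("10 Gbps", 10_000), ("100 Gbps", 100_000)]
for label, mbps in links:
    print(f"1 TB over {label}: {transfer_time_hours(1_000, mbps):.2f} h")
```

Under these assumptions, 1 TB takes roughly 55.6 hours at 50 Mbps but about 17 minutes at 10 Gbps, which is why bulk tiering and recall workloads benefit from the higher-bandwidth options.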

These dedicated private links are a good fit for real-time applications which need a reliable network and consistent low latency, such as video and voice apps.

VPN

Connecting your on-prem environment to cloud services over VPN links is an option when security is the main concern and you don’t need the high bandwidth that direct links provide.

With site-to-site VPN connections, traffic is encrypted using the industry-standard IPsec protocol suite. Because that traffic still traverses the public internet, latency will still fluctuate. All major providers offer site-to-site VPN options, and some also offer client-based VPN services (for example, AWS Client VPN and Azure point-to-site VPN).

A site-to-site connection is, for example, a VPN tunnel between two routers, each with a corporate network behind it. Google Cloud VPN, Azure VPN Gateway, and AWS Site-to-Site VPN support this model. In this topology, end users do not establish the VPN session themselves. By contrast, with client-based (client-to-site) VPN, individual users, such as laptop users, establish a VPN connection to a VPN access server.

The key takeaway is that connecting your network to the cloud over a VPN increases security. From a performance perspective, however, the connection inherits the variability of a public internet route.

Measuring Cloud Tiering Performance with the Cloud Performance Test

Performance is also one of the main concerns for organizations that want to take advantage of NetApp Cloud Tiering. Once tiering is enabled, how long does it take for cold data to be tiered to object storage? More importantly, how long will it take to retrieve that data when it’s needed?

You always want to set clear expectations for retrieval times. Retrieving data over a direct link is not the same as retrieving it over a publicly routed network, so how can you measure Cloud Tiering’s performance precisely?
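A first-order way to turn a measured average latency and throughput into an expected retrieval time is to take the slower of two bounds: a latency bound (how long the requests wait on round trips) and a bandwidth bound (how long the bytes take to move). The Python sketch below uses that simplified model; the function name and the input values are hypothetical, and real behavior also depends on caching, request parallelism, and provider-side limits.

```python
def estimate_retrieval_seconds(num_reads: int, read_size_bytes: int,
                               avg_latency_s: float, throughput_bytes_s: float,
                               concurrency: int = 1) -> float:
    """First-order retrieval-time estimate: the slower of a latency bound and a bandwidth bound."""
    # Latency bound: with `concurrency` requests in flight, each batch costs about one average latency.
    latency_bound = num_reads * avg_latency_s / concurrency
    # Bandwidth bound: total bytes divided by sustained throughput.
    bandwidth_bound = num_reads * read_size_bytes / throughput_bytes_s
    return max(latency_bound, bandwidth_bound)


# Hypothetical inputs: 100,000 random 4 KB reads, 40 ms average latency,
# 100 MB/s sustained throughput, 32 requests in flight.
estimate = estimate_retrieval_seconds(100_000, 4 * 1024, 0.040, 100e6, concurrency=32)
print(f"~{estimate:.0f} s")  # ~125 s (latency-bound)
```

For many small reads, the latency term usually dominates, so a lower-latency path such as a direct link can matter more than raw bandwidth; for large sequential recalls, the bandwidth term takes over.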

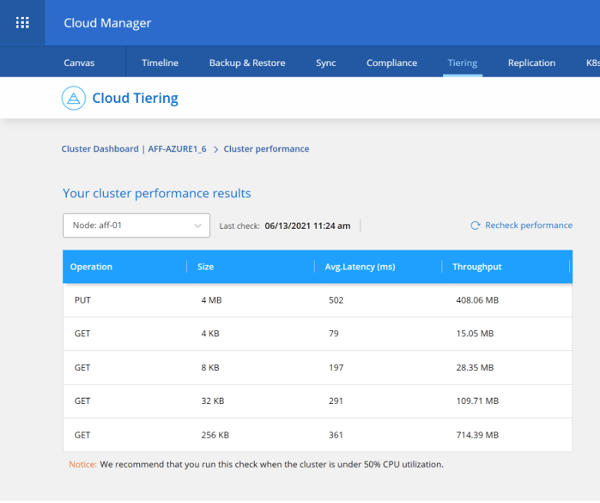

Fortunately, NetApp has a solution for this: the Cloud Performance Test. Built on ONTAP’s object storage profiler, the Cloud Performance Test lets you test the latency and throughput of object stores before (or after) you attach them to your ONTAP cluster’s aggregates as cloud tiers. The profiler has been around since ONTAP 9.4, but until now it was only available through the ONTAP CLI. It is now available through the Cloud Tiering service, so you can test your network performance for data tiering directly from Cloud Manager.

When you start a Cloud Performance Test, the tool sends thousands of PUT and GET requests to your object storage provider to simulate data uploads and downloads. For PUT (write) requests, it uses 4 MB objects, the object size Cloud Tiering uploads when sending data to the cloud. (Note: the object size is fixed at 4 MB based on performance recommendations from the cloud providers.)
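The upload side of such a test can be sketched in a few lines. This is not ONTAP’s profiler code; it is a minimal Python illustration that assumes a caller-supplied `put(key, data)` function (a hypothetical interface) and sends requests one at a time, whereas the real test issues thousands of requests. It times PUTs of 4 MB objects (treated here as 4 MiB) and reports average latency and throughput.

```python
import os
import time
from typing import Callable, Dict

# 4 MB objects, treated here as 4 MiB (1,024 blocks of 4 KiB).
OBJECT_SIZE = 4 * 1024 * 1024


def profile_puts(put: Callable[[str, bytes], None], count: int) -> Dict[str, float]:
    """Time `count` sequential PUTs of one 4 MiB object each via the supplied put() callable."""
    payload = os.urandom(OBJECT_SIZE)  # random bytes, so compression cannot skew results
    elapsed = 0.0
    for i in range(count):
        start = time.perf_counter()
        put(f"profiler/object-{i:05d}", payload)  # hypothetical key naming scheme
        elapsed += time.perf_counter() - start
    mib_sent = count * OBJECT_SIZE / (1024 * 1024)
    return {
        "avg_latency_ms": 1000 * elapsed / count,
        "throughput_mib_s": mib_sent / elapsed if elapsed > 0 else float("inf"),
    }


# Usage against an in-memory stand-in for an object store (no network involved).
store: Dict[str, bytes] = {}
put_stats = profile_puts(store.__setitem__, count=8)
print(put_stats)
```

With a real bucket you could pass, for example, `lambda k, b: s3.put_object(Bucket="my-bucket", Key=k, Body=b)` using a boto3 S3 client; the bucket name is a placeholder. Running requests in parallel would bring the sketch closer to a load test, at the cost of more code.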

For GET (read) requests, the Cloud Performance Test downloads data segments of 4, 8, 32, and 256 KB and reports the average latency along with a throughput measurement. This gives you clear numbers on what to expect from Cloud Tiering in any connectivity scenario.
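The read side can be sketched the same way. Again, this is an illustrative Python approximation rather than ONTAP’s implementation: it assumes a hypothetical `get_range(key, offset, length)` callable that returns the requested bytes, issues sequential ranged reads for each of the segment sizes listed above, and reports per-size average latency and throughput.

```python
import time
from typing import Callable, Dict

KIB = 1024
SEGMENT_SIZES = [4 * KIB, 8 * KIB, 32 * KIB, 256 * KIB]  # read sizes the article lists
OBJECT_SIZE = 4 * KIB * KIB  # one 4 MiB tiered object to read from


def profile_gets(get_range: Callable[[str, int, int], bytes], key: str,
                 requests_per_size: int) -> Dict[int, Dict[str, float]]:
    """For each segment size, time sequential ranged GETs and report latency and throughput."""
    results: Dict[int, Dict[str, float]] = {}
    for size in SEGMENT_SIZES:
        elapsed = 0.0
        received = 0
        for i in range(requests_per_size):
            # Walk through the object so reads hit different, always-valid offsets.
            offset = (i * size) % (OBJECT_SIZE - size + 1)
            start = time.perf_counter()
            data = get_range(key, offset, size)
            elapsed += time.perf_counter() - start
            received += len(data)
        results[size] = {
            "avg_latency_ms": 1000 * elapsed / requests_per_size,
            "throughput_mib_s": received / (KIB * KIB) / elapsed if elapsed > 0 else float("inf"),
            "bytes_received": received,
        }
    return results


# Usage against an in-memory stand-in: a dict of object key -> bytes.
objects = {"tiered/object-00000": bytes(OBJECT_SIZE)}
get_stats = profile_gets(lambda k, off, n: objects[k][off:off + n], "tiered/object-00000", 50)
for size, stats in get_stats.items():
    print(f"{size // KIB} KB: {stats['avg_latency_ms']:.4f} ms avg, {stats['throughput_mib_s']:.1f} MiB/s")
```

Against S3, a ranged read could be `s3.get_object(Bucket="my-bucket", Key=k, Range=f"bytes={off}-{off + n - 1}")["Body"].read()` with boto3 (bucket name is a placeholder). Small segments tend to be dominated by per-request latency and large ones by bandwidth, which is why reporting both numbers per size is useful.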

In addition, regardless of the connection type, Cloud Tiering optimizes read performance and egress charges: when cold data is read from the cloud tier, only the 4 KB blocks actually needed are retrieved, not an entire file or object. This improves performance when pulling data from object storage. Note also that public internet connectivity is much less of a concern when tiering to NetApp StorageGRID, which is typically deployed on-premises.
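The block-granular read can be illustrated with simple arithmetic. This Python sketch assumes, purely for illustration, that the requested bytes lie contiguously within a single 4 MB object; how ONTAP actually maps file blocks to object offsets is internal to the product. It computes which 4 KB blocks a read touches, how many bytes that means fetching, and the corresponding HTTP Range header value.

```python
BLOCK_SIZE = 4 * 1024          # 4 KB blocks, as described above
OBJECT_SIZE = 4 * 1024 * 1024  # 4 MB object (1,024 blocks of 4 KB)


def blocks_for_read(offset: int, length: int) -> range:
    """Indices of the 4 KB blocks covering bytes [offset, offset + length)."""
    if length <= 0:
        return range(0)
    first = offset // BLOCK_SIZE
    last = (offset + length - 1) // BLOCK_SIZE
    return range(first, last + 1)


def bytes_fetched(offset: int, length: int) -> int:
    """Bytes transferred when only the covering blocks are retrieved."""
    return len(blocks_for_read(offset, length)) * BLOCK_SIZE


def range_header(offset: int, length: int) -> str:
    """HTTP Range header value (inclusive end) for the covering blocks; assumes length > 0."""
    blocks = blocks_for_read(offset, length)
    return f"bytes={blocks[0] * BLOCK_SIZE}-{(blocks[-1] + 1) * BLOCK_SIZE - 1}"


# A 10,000-byte read starting at byte 6,000 touches blocks 1-3.
print(list(blocks_for_read(6_000, 10_000)))                      # [1, 2, 3]
print(bytes_fetched(6_000, 10_000), "of", OBJECT_SIZE, "bytes")  # 12288 of 4194304 bytes
print(range_header(6_000, 10_000))                               # bytes=4096-16383
```

In this example only 12 KB of a 4 MB object crosses the network, under 0.3% of the object, which is the effect on latency and egress charges that the paragraph above describes.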

For step-by-step instructions on running a Cloud Performance Test, see the Test cloud performance page in the Cloud Manager documentation.

Conclusion

Whether your hybrid cloud connectivity model goes through the public internet, a direct connection to your cloud provider, or a VPN, the Cloud Performance Test and the object storage profiler make it easy to measure Cloud Tiering performance precisely when retrieving data back to the hot tier.